If you’ve been paying attention to the AI software space lately, you’ve probably heard former Tesla AI Director Andrej Karpathy use the term “Vibe Coding.” It is an intoxicating, almost magical idea: We are moving past the era of strict syntax and semicolons. Instead, you just describe the “vibe” or the overarching intent of your application in natural language, and the AI handles the execution.

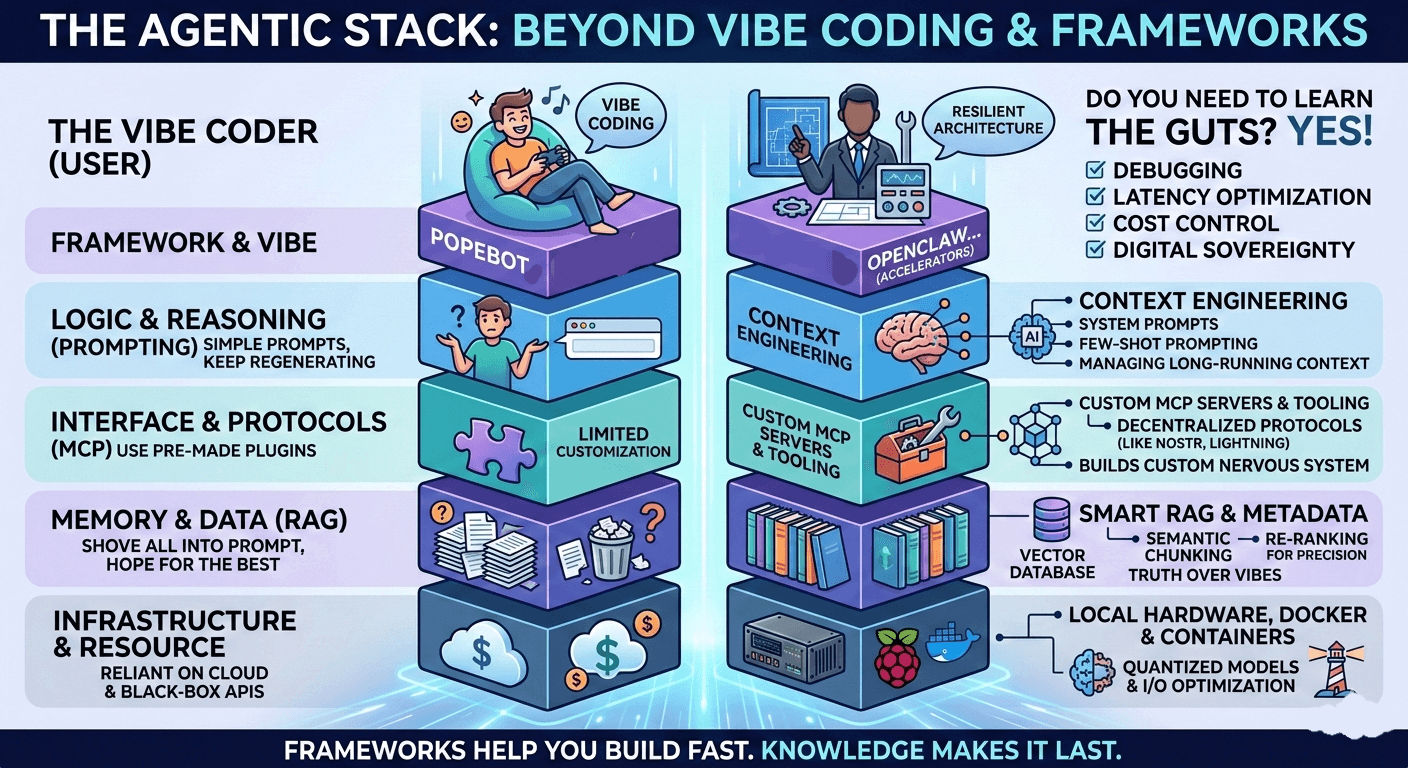

Combine this natural language prompting with powerful agentic frameworks like PopeBot or OpenClaw, and it is incredibly easy to reach a tempting conclusion. You might think, “We don’t need to learn the underlying plumbing anymore. Why study LLM architectures, RAG, or the Model Context Protocol (MCP) when the framework and the vibes can do all the heavy lifting?”

As I have been building out autonomous agents for KheAi, I’ve had to confront this exact question daily. The truth I’ve discovered is a bit of a paradox: These frameworks make building faster than ever, but they make understanding the foundational tech more critical than ever.

Relying purely on “vibes” and frameworks is a trap. Here is exactly why, and how understanding the guts of these systems will become your ultimate competitive edge in an AI-saturated market.

Phase 1: The Trap of the “Leaky Abstraction”

In software engineering, there is a concept called a “leaky abstraction.” An abstraction is meant to hide complex details (like how a car engine works) behind a simple interface (like a steering wheel and gas pedal). But an abstraction “leaks” when the messy reality of the underlying system pokes through the simplified interface, forcing you to deal with the complexity anyway.

AI Frameworks are massive, towering abstractions. When everything goes right, they feel like pure magic. But when things break—and they always do—you are suddenly staring at a wall of context-length errors, hallucinated tool calls, and infinite loops.

At its core, the mechanics of “vibe coding” usually follow a deceptive cycle:

- Prompting: You describe a feature to an AI.

- Implementation: You copy the generated code into your project.

- Validation: You observe that the code “runs” and passes basic tests.

- Deployment: You ship the feature based on the “vibe” that it works.

If you are just vibe coding through this cycle, you have no underlying mental model. When your AI-generated search feature catastrophically crashes your database under heavy load because it lacked debouncing or caching, natural language cannot save you. You cannot prompt your way out of a system you do not fundamentally understand.

The Debugging Trap and Kernighan’s Law

The perceived speed of vibe coding is a mathematical illusion. While the initial “writing” phase is lightning fast, the total lifecycle—from idea to stable, maintainable production code—often takes much longer.

- The 90-Minute Edge Case: Code written in ten seconds by an AI often requires ninety minutes of debugging to fix edge cases the model simply didn’t consider.

- Kernighan’s Law: This famous programming adage states that debugging is twice as hard as writing the code in the first place. Therefore, if you write code at the absolute limit of your understanding, you are, by definition, not smart enough to debug it. When the code is written beyond your understanding by an AI, debugging becomes nearly impossible.

Phase 2: Mastering the Engine (The Three Pillars)

To build agents that are actually autonomous, resilient, and useful, you have to look past the framework’s simple interface and master the core pillars beneath it.

1. Prompting is Now “Context Engineering”

We have officially moved past simple “you are a helpful assistant” prompts. In a modern framework driven by GitHub Actions or Next.js server actions, you are managing a massive, continuous flow of automated context.

If you don’t deeply understand token limits, attention mechanisms, or the “lost in the middle” phenomenon (where LLMs forget information buried in the middle of a long prompt), your agent will silently forget its core instructions during long-running tasks. The framework will pass the messages back and forth, but you have to engineer the logic of how that memory is structured and prioritized.

2. RAG (Retrieval-Augmented Generation) is for Truth

A framework will happily connect your AI agent to a database. But it won’t tell you why your agent is pulling completely irrelevant data to answer a user’s query.

RAG isn’t just “Google search for AI.” It requires a deep understanding of data preparation. You need to know about chunking strategies (how you break text apart), vector embeddings (how text is turned into math), and reranking (how results are prioritized). If you don’t know how to tune your vector database, your agent won’t be grounded in reality—it will just confidently “vibe” its way into massive hallucinations.

3. MCP (Model Context Protocol) is Your Nervous System

MCP is the standardized plumbing that allows AI models to talk to local files, external APIs, and tools safely. Frameworks give you great pre-built tools, but what happens when you need your agent to interact with something custom?

What if you want your agent to talk to a Nostr relay, a Lightning Network node, or a local Docker container? If you don’t understand the protocol itself, you are trapped inside the framework’s walled garden. Understanding MCP lets you build custom nervous systems for your agents, allowing them to truly read, write, and act in the digital economy.

Phase 3: The Hardware Reality (Why Sovereignty Demands Knowledge)

This gap between “vibes” and “reality” becomes acutely obvious when you step outside the comfort of unlimited cloud budgets and black-box APIs.

If your goal is true digital sovereignty—running autonomous web 4.0 actors on resilient, local hardware—you absolutely cannot afford to be ignorant of the stack. When I deploy an agent to a Raspberry Pi 4B for KheAi, I can’t just throw a massive, unoptimized LLM at it and hope for the best. Physics and hardware constraints apply.

- Resource Constraints: You have to know exactly which quantized models (compressed AI models) can physically fit into 8GB of RAM while still leaving room for the operating system.

- I/O Bottlenecks: You have to understand hardware read/write cycles. Booting from a proper SSD is mandatory for agentic reasoning tasks. If you try to run a local vector database on a standard microSD card, the intense read/write load will corrupt the card remarkably fast.

Vibe coding assumes infinite compute. Sovereign engineering requires disciplined architecture.

Phase 4: The Architect vs. The Vibe Coder

Think of the modern agentic stack as a hierarchy. The framework sits at the very top, but it relies entirely on the structural integrity of everything beneath it. Here is how the two mindsets compare:

| Capability | The “Vibe Coder” (User) | The Sovereign Engineer (Architect) |

|---|---|---|

| Tooling | Uses pre-packaged plugins; hopes they don’t deprecate. | Writes custom MCP servers to bridge agents to specialized, decentralized protocols. |

| Memory | Shoves everything into the prompt until the context window breaks. | Implements optimized RAG pipelines with semantic chunking to preserve token efficiency. |

| Debugging | Keeps regenerating responses hoping the AI magically fixes itself. | Reads the LLM reasoning traces to identify logical fallacies and latency bottlenecks. |

| Infrastructure | Fully reliant on centralized cloud providers and API subscriptions. | Can deploy containerized agent swarms via Docker onto local, sovereign hardware. |

The Senior Developer’s Strategic Framework

Senior developers and architects do not avoid AI; rather, they use it as an amplifier, not a replacement. Their strategy follows a rigorous hierarchy:

- Problem Design First: They map out the architecture, the data flow, and the logic before ever writing a prompt.

- Boilerplate Delegation: AI is used strictly for repetitive, low-risk tasks that the developer already understands perfectly (e.g., config files, test scaffolding, common CSS).

- Exploration, Not Execution: They use AI to brainstorm multiple approaches to a problem, then use their human expertise to evaluate trade-offs and security implications.

- The “Explain Every Line” Rule: You must be able to explain the purpose and trade-offs of every single line of code in your project, regardless of whether you typed it or an AI generated it.

The Verdict: Choosing Your Trajectory

We are at a crossroads, and there are two distinct paths for developers in an AI-saturated market.

The Path of Least Resistance relies on continuous vibe coding. This leads to a hard career ceiling. These developers will become increasingly replaceable as AI advances because they lack the deep systems intuition required to solve complex, high-stakes problems when the servers are on fire.

The Path of Deep Understanding involves intentionally slowing down to build fundamental skills. By reading documentation instead of relying solely on prompts for core logic, you build the “pattern recognition” muscles that make you irreplaceable.

Frameworks like PopeBot and paradigms like Vibe Coding are incredible power tools. They strip away the tedium of boilerplate code and let you iterate at the speed of thought. But tools do not replace the craftsman. Mastering LLM architecture, RAG pipelines, and MCP isn’t outdated; it’s the exact knowledge that separates the people who use AI from the people who command it.

Your Next Step (The Anti-Vibe Exercise)

If you want to escape the vibe coding trap today, try this: Take a small feature you previously built with the help of AI. Delete it. Now, rebuild it entirely from scratch using only official documentation and your own logic.

It will be slower. It will be frustrating. But it will force you to confront every trade-off and decision, turning fragile “vibes” into permanent, sovereign expertise.