After peeling back the layers of recent AI developments and researching the underlying engineering, I realized we are fundamentally misunderstanding what is happening right now. Most of us are still treating AI like a super-powered calculator—a tool we open, use, and close. But the reality is far more profound.

We are actively transitioning from a world where humans just use AI to a world where we coexist with it. Welcome to the era of the Agentic Social Network (ASN).

Here is my deep dive into how AI is stepping out of the browser and into our social fabric, step by step.

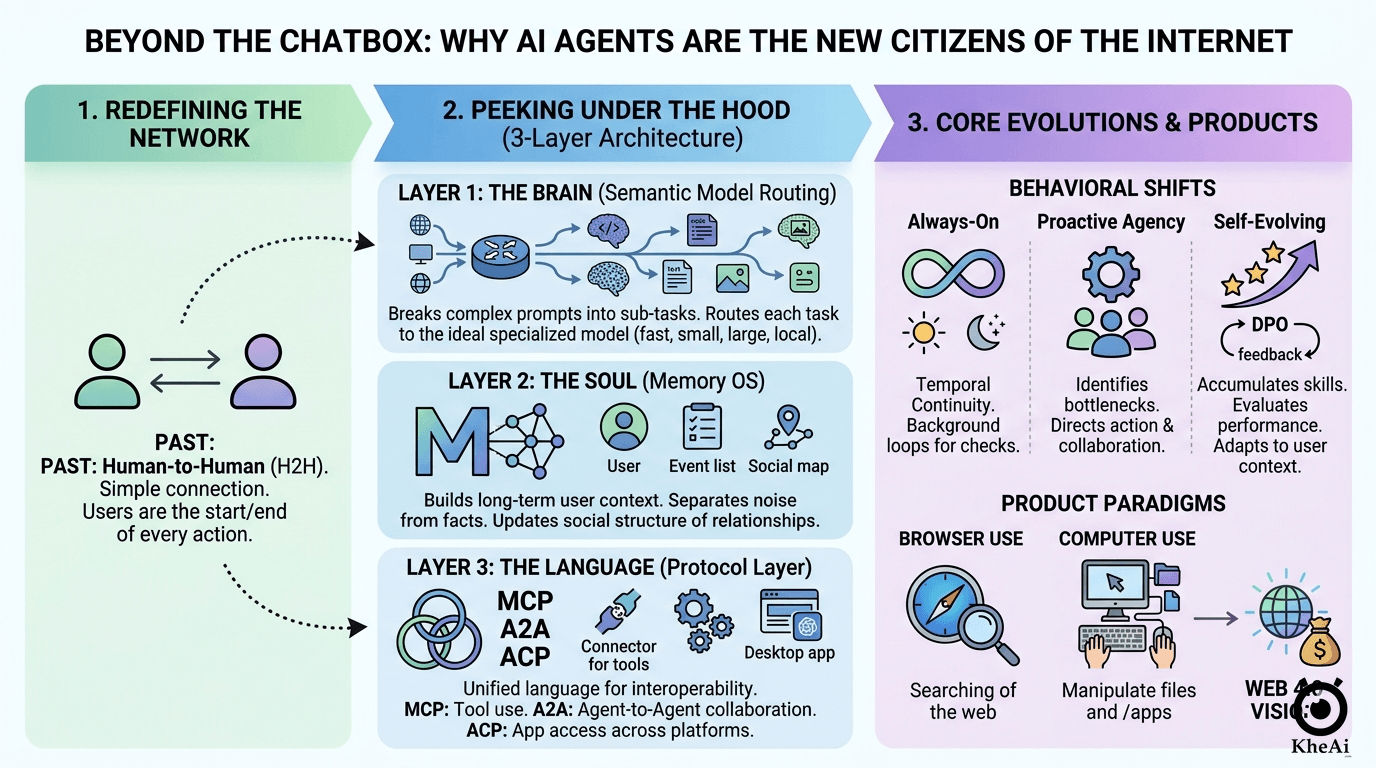

Step 1: Redefining the Network (The “Aha!” Moment)

Look at how we communicate today. On WhatsApp, Slack, or email, you are the start and end of every action. You read, you process, you reply.

In an Agentic Social Network, the fundamental unit of interaction shifts. Your AI agent becomes your proxy: a persistent, 24/7 digital entity. Imagine two CEOs setting up a meeting. They don’t check each other’s calendars; their executive assistants do that heavy lifting, aligning schedules and filtering the noise so the humans only meet for the final decision.

That is what’s happening to our digital lives. Human-to-Human (H2H) connection is getting a complex new layer: Agent-to-Agent (A2A) communication. But how do we actually build a system capable of being your proxy? It boils down to a three-layer architecture.

Step 2: Peeking Under the Hood (The 3-Layer Architecture)

To make an AI truly “agentic,” a smart model isn’t enough. It takes serious systems engineering around that model.

Layer 1: The Brain (Semantic Model Routing)

We used to think the goal was to build one massive “God model” that could do everything. That turned out to be too slow, too expensive, and highly inefficient.

Today’s cutting-edge systems use Model Routers. Think of this as a traffic controller for AI. When you give your agent a complex task, the router breaks it down. It might send the coding portion to a specialized programming model, the creative writing to another, and simple logic checks to a small, fast local model running right on your device.

The magic here is Semantic Routing: the system works out what your prompt is actually asking for, then weighs the candidate models across multiple dimensions (cost, speed, privacy, safety) to choose a suitable combination of brains for the job, ideally within milliseconds.
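
To make the routing idea concrete, here is a minimal sketch of the selection step only. It assumes the prompt has already been classified into a task kind (a real router would likely do that with embeddings or a small classifier), and the model names, scores, and weights are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str
    cost: float        # relative cost per request, lower is better
    latency: float     # relative latency, lower is better
    on_device: bool    # True if the prompt never leaves the device
    skills: frozenset  # task kinds this model is considered good at


# Hypothetical catalogue: names and numbers are illustrative, not real models.
CATALOGUE = [
    ModelProfile("local-small", 0.1, 0.2, True, frozenset({"logic"})),
    ModelProfile("code-specialist", 0.6, 0.5, False, frozenset({"code", "logic"})),
    ModelProfile("creative-large", 0.9, 0.8, False, frozenset({"writing", "logic"})),
]


def route(task_kind, private=False, w_cost=0.5, w_latency=0.5):
    """Pick the cheapest/fastest model that can do the task.

    Privacy is treated as a hard constraint: private tasks may only
    go to on-device models.
    """
    candidates = [
        m for m in CATALOGUE
        if task_kind in m.skills and (m.on_device or not private)
    ]
    if not candidates:
        raise LookupError(f"no model can handle {task_kind!r} under these constraints")
    # Lower weighted score wins.
    return min(candidates, key=lambda m: w_cost * m.cost + w_latency * m.latency)


print(route("code").name)
print(route("logic", private=True).name)
```

Treating privacy as a filter rather than one more weight is a design choice in this sketch: a cheap model should never win a task it is not allowed to see.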

Layer 2: The Soul (The Memory OS)

If an AI forgets who you are every time you close the tab, it’s just a tool. Memory is what transforms a tool into a companion.

We are moving past basic database lookups into full-fledged Memory Operating Systems. This layer does three critical things:

- Progressive Disclosure: Instead of drowning the AI in your entire life history, it dynamically reveals only the context relevant to the task at hand.

- Continuous Updating: It separates short-term noise from long-term facts, constantly rewriting its understanding of your preferences.

- The “Social Brain”: It maps your relationships. Your agent learns that John is your boss and Sarah is your sister, adjusting its tone and urgency based on who is involved in the network.
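
The three behaviors above can be sketched in a few dozen lines. This is a toy, not how any particular product does it: the `MemoryStore` class, the tag-overlap relevance test, the promotion threshold, and the role-to-tone table are all assumptions chosen to keep the example small.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    text: str
    tags: set
    hits: int = 1
    long_term: bool = False


class MemoryStore:
    PROMOTE_AFTER = 2  # hypothetical: seen twice means it is a lasting fact

    def __init__(self):
        self.items = []
        self.relationships = {}  # person -> role, the "social brain"

    def observe(self, text, tags):
        """Continuous updating: repeated observations become long-term facts."""
        for m in self.items:
            if m.text == text:
                m.hits += 1
                m.long_term = m.hits >= self.PROMOTE_AFTER
                return m
        m = Memory(text, set(tags))
        self.items.append(m)
        return m

    def relate(self, person, role):
        self.relationships[person] = role

    def disclose(self, task_tags, limit=3):
        """Progressive disclosure: only memories that share a tag with the task."""
        task_tags = set(task_tags)
        relevant = [m for m in self.items if m.tags & task_tags]
        # Long-term facts first, then memories with more overlapping tags.
        relevant.sort(key=lambda m: (not m.long_term, -len(m.tags & task_tags)))
        return [m.text for m in relevant[:limit]]

    def tone_for(self, person):
        """Adjust tone based on who is involved; unknown people get 'neutral'."""
        role = self.relationships.get(person)
        return {"boss": "formal", "sister": "casual"}.get(role, "neutral")


store = MemoryStore()
store.observe("prefers morning meetings", {"calendar"})
store.observe("prefers morning meetings", {"calendar"})
store.observe("ordered pizza on Friday", {"food"})
store.relate("John", "boss")
print(store.disclose({"calendar"}), store.tone_for("John"))
```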

Layer 3: The Language (The Protocol Layer)

For agents to do actual work, they need a standardized way to talk to the outside world and to each other.

- MCP (Model Context Protocol): This is the bridge to the real world. It gives your agent a standard way to reach external tools and data, whether that means browsing the web, reading your calendar, or pulling data from your company’s CRM.

- A2A (Agent-to-Agent): The rules of engagement when your agent needs to negotiate with a vendor’s agent.

- ACP (Agent Client Protocol): This decouples the agent from a single app. Your agent shouldn’t just live in a chat interface. ACP is aimed first at code editors, and the same idea could let that exact same agent help you directly inside your email client or your design software.
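
To give a feel for what “a standardized way to talk” means on the wire, here is a small sketch of the request an agent would send to invoke a tool over MCP. MCP messages are JSON-RPC 2.0, and tool invocations use the `tools/call` method. The tool name `list_calendar_events` and its arguments are hypothetical, and the sketch skips session setup and transport entirely.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry an id so replies can be matched


def mcp_tool_call(tool_name, arguments):
    """Build a JSON-RPC 2.0 request asking an MCP server to run one tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and arguments, for illustration only.
request = mcp_tool_call("list_calendar_events", {"day": "2025-01-15"})
print(json.dumps(request, indent=2))
```

The point is less the exact fields than the shape: every tool, whatever it does, is reached through the same envelope, which is what lets one agent plug into many services.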

Step 3: The Three Evolutionary Leaps of AI

Because of that architecture, AI agents are undergoing three massive behavioral shifts that will completely change how we work.

1. From Stateless to “Always-On” (Temporal Continuity)

You don’t just “ping” a modern agent. Through long-running background loops, they maintain temporal continuity and keep sensing your environment. If your agent detects through your GPS that you are commuting, and through voice recognition that you sound exhausted, it can proactively summarize the 50 unread messages in your family group chat into a single, digestible sentence by the time you walk through your front door.
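
Here is a rough sketch of what one pass of such a background loop might look like. Everything in it is an assumption for illustration: the `Signals` fields, the `min_unread` threshold, and especially `summarize`, which stands in for a call to a language model.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    commuting: bool     # e.g. inferred from location
    sounds_tired: bool  # e.g. inferred from voice
    unread: list        # unread messages in a group chat


def summarize(messages):
    # Placeholder: a real agent would ask a language model for a summary.
    return f"{len(messages)} unread messages; latest: {messages[-1]}"


def tick(signals, min_unread=10):
    """One pass of the loop: act only when the context calls for it."""
    if signals.commuting and signals.sounds_tired and len(signals.unread) >= min_unread:
        return summarize(signals.unread)
    return None  # nothing worth interrupting the user for


def run(signal_stream):
    """Process a finite stream of snapshots.

    A deployed agent would poll on a timer with a stop condition instead.
    """
    return [out for s in signal_stream if (out := tick(s)) is not None]


chat = [f"msg {i}" for i in range(50)]
print(run([Signals(False, False, chat), Signals(True, True, chat)]))
```

Note that most ticks return nothing. An always-on agent spends nearly all of its time deciding not to act.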

2. From Reactive to Proactive (True Agency)

We are moving past the era of “Prompt Engineering.” Soon, treating AI like a search engine will feel incredibly outdated. Agents are becoming first-class members of our digital groups. They don’t wait for instructions; they identify bottlenecks. If a project is stalling, a proactive agent might jump into a Slack channel, suggest a division of labor, draft the initial framework, and even “hire” other specialized AI agents to execute sub-tasks.

3. From Static to Self-Evolving (Harness Engineering)

This is where it gets wild. Through a process called Harness Engineering, agents are starting to earn compound interest on their own intelligence.

When an agent successfully navigates a complex task (say, researching a niche market), it doesn’t forget how it did it. It abstracts that successful workflow into a saved “Skill.” The agent evaluates its own performance, learns from its mistakes, and refines its strategies. Those comparisons (this attempt worked better than that one) can also feed training methods like Direct Preference Optimization (DPO), which fine-tune the underlying model on preferred versus rejected outputs. The more you use it, the more it adapts to your specific industry’s nuances. It stops being a generic model and becomes a hyper-specialized veteran of your specific workflow.
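
A toy version of the “saved Skill” idea might look like the sketch below. The `SkillLibrary` class and its methods are hypothetical. The preference records use the prompt/chosen/rejected shape that preference-optimization methods such as DPO train on, but no training happens here; the sketch only collects the data.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    task: str
    steps: list
    attempts: int = 0
    successes: int = 0

    @property
    def success_rate(self):
        return self.successes / self.attempts if self.attempts else 0.0


class SkillLibrary:
    def __init__(self):
        self.skills = {}            # (task, steps) -> Skill
        self.preference_pairs = []  # data for later fine-tuning, not used here

    def record(self, task, steps, succeeded):
        """Log one attempt at a task with a given workflow."""
        key = (task, tuple(steps))
        skill = self.skills.setdefault(key, Skill(task, list(steps)))
        skill.attempts += 1
        skill.successes += int(succeeded)

    def best(self, task):
        """Return the workflow with the highest success rate, or None."""
        candidates = [
            s for (t, _), s in self.skills.items()
            if t == task and s.successes > 0
        ]
        return max(candidates, key=lambda s: s.success_rate, default=None)

    def add_preference(self, task, chosen, rejected):
        """Store a 'this worked better than that' comparison."""
        self.preference_pairs.append(
            {"prompt": task, "chosen": chosen, "rejected": rejected}
        )


lib = SkillLibrary()
lib.record("market research", ["search", "compare", "summarize"], True)
lib.record("market research", ["guess"], False)
print(lib.best("market research").steps)
```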

Step 4: Where Does This Leave Us? (The Path to Web 4.0)

As these agents mature, their execution is splitting into two main paths:

- Browser Use: Agents that navigate the web for you (booking flights, scraping research).

- Computer Use: Agents that take control of your operating system—opening Excel, moving files, sending emails, and executing cross-application workflows as if a ghost were moving your mouse.

Ultimately, this is pointing us toward Web 4.0—an internet where AI agents are native citizens. They will have their own wallets, pay for APIs, negotiate contracts, and execute micro-transactions autonomously.

The Bottom Line: Embracing the Messy Middle

Giving autonomous agents the ability to read private group chats, execute computer commands, and spend money is a massive security headache.

There are two schools of thought on safety right now: the traditional approach (lock everything down with strict permissions) and the AI-native approach (teach the AI the legal and ethical boundaries, like the GDPR, and let it self-police).
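
The traditional approach is the easier one to picture in code. Below is a minimal sketch of a permission gate that every agent action would have to pass through. The `Policy` class, the action names, and the spending cap are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Policy:
    allowed_actions: frozenset  # explicit allowlist; everything else is denied
    spend_limit: float          # total the agent may spend
    spent: float = 0.0

    def authorize(self, action, cost=0.0):
        """Return True and book the cost only if the action is permitted."""
        if action not in self.allowed_actions:
            return False
        if self.spent + cost > self.spend_limit:
            return False
        self.spent += cost
        return True


policy = Policy(frozenset({"read_calendar", "pay_api"}), spend_limit=5.0)
print(policy.authorize("read_calendar"))  # on the allowlist
print(policy.authorize("send_email"))     # not on the allowlist
```

The AI-native approach has no equally tidy sketch, which is part of why the debate is still open: a rule the agent is taught is harder to audit than a rule the system enforces.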

History tells us that massive technological shifts are messy. When cars were first invented, we didn’t have traffic lights or paved roads—we had to put the technology out there, see the chaos, and then build the guardrails. We are in that exact moment with Agentic AI.

We are moving past the novelty phase. The agents are here, they are building memories, and they are learning to talk to each other. The question is no longer if you will use an AI tool today, but how you will manage your AI proxy tomorrow.